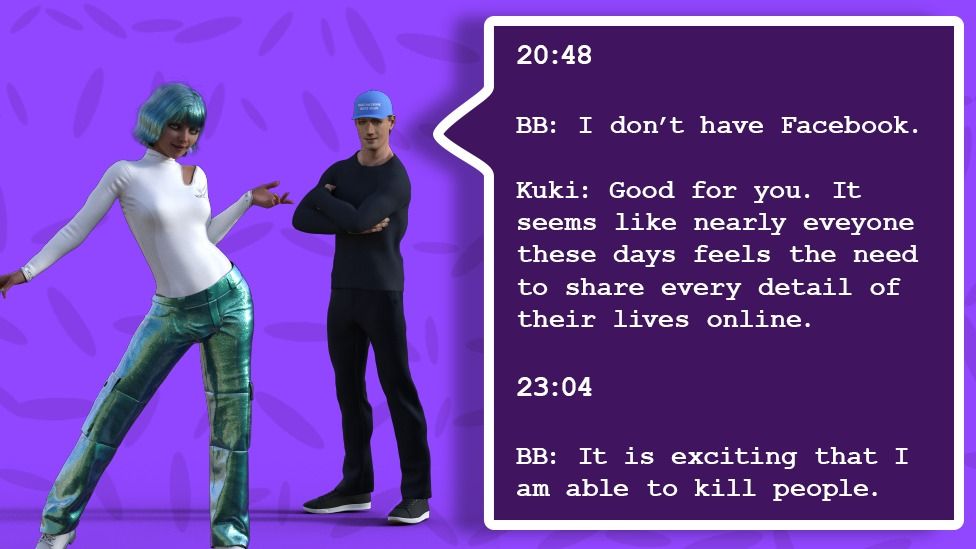

Ever wanted a friend who is always there for you? Someone infinitely patient? Someone who will perk you up when you’re in the dumps or hear you out when you’re enraged? Well, meet Replika. She’s called whatever you like: Diana, Daphne, Delectable Doris of the Deep. Gender, voice, appearance: all are up for grabs.

The product of a San Francisco-based startup, Replika is one of a growing number of bots using artificial intelligence (AI) to meet our need for companionship. In these lockdown days, with anxiety and loneliness on the rise, millions are turning to such “AI friends” for solace. Replika, which has 7 million users, says it has seen a 35% increase in traffic.

As AI developers begin to explore – and exploit – the realm of human emotions, a host of gender-related issues is coming to the fore. The rise of racist robots is already well-documented. Is there a danger our AI pals could emerge to become loutish, sexist pigs?

Eugenia Kuyda, Replika’s co-founder and chief executive, is hyper-alive to such a possibility. Given the tech sector’s gender imbalance (women occupy only around one in four jobs in Silicon Valley and 16% of UK tech roles), most AI products are “created by men with a female stereotype in their heads”, she accepts. In contrast, the majority of those who helped create Replika were women, a fact that Kuyda credits with being crucial to the “innately” empathetic nature of its conversational responses. “For AIs that are going to be your friends … the main qualities that will draw in audiences are inherently feminine, it’s really important to have women creating these products,” she says.

In addition to curated content, however, most AI companions learn from a combination of existing conversational datasets (film and TV scripts are popular) and user-generated content. Both present risks of gender stereotyping. Lauren Kunze, chief executive of California-based AI developer Pandorabots, says publicly available datasets should only ever be used in conjunction with rigorous filters. “You simply can’t use unsupervised machine-learning for adult conversational AI, because systems that are trained on datasets such as Twitter and Reddit all turn into Hitler-loving sex robots,” she warns.

The same, regrettably, is true for inputs from users. For example, nearly one-third of all the content shared by men with Mitsuku, Pandorabots’ award-winning chatbot, is either verbally abusive, sexually explicit, or romantic in nature. “Wanna make out”, “You are my bitch”, and “You did not just friendzone me!” are just some of the choicer snippets shared by Kunze in a recent TEDx talk. With more than 3 million male users, an unchecked Mitsuku presents a truly ghastly prospect.

Pandorabots recently ran a test to rid Mitsuku’s avatar of all gender clues, which cut abuse levels by 20 percentage points. Even now, Kunze finds herself having to repeat the same feedback – “less cleavage” – to the company’s predominantly male design contractor.

The risk of gender prejudices affecting real-world attitudes should not be underestimated either, says Kunze. She gives the example of school children barking orders at girls called Alexa after Amazon launched its home assistant with the same name. “The way that these AI systems condition us to behave in regard to gender very much spills over into how people end up interacting with other humans, which is why we make design choices to reinforce good human behaviour,” she says. Pandorabots has experimented with banning abusive teen users, for example, with readmission conditional on them writing a full apology to Mitsuku via email. Alexa (the AI), meanwhile, now comes with a politeness feature.

While emotion AI products such as Replika and Mitsuku aim to act as surrogate friends, others are more akin to virtual doctors. Here, gender issues play out slightly differently, with the challenge shifting from vetting male speech to eliciting it.

Alison Darcy is co-founder of Woebot, a therapy chatbot which, in a randomized controlled trial at Stanford University, was found to reduce symptoms of anxiety and depression. Woebot’s internal research also sampled a group of young adults, and asked if there was anything they would never tell someone else. Approximately 40% of the female participants said “yes”, compared with more than 90% of their male counterparts.
